The Evolution of Embedded AI Systems: A 2026 Engineering Perspective

Cloud computing has grown from a cost-cutting measure into a key component of modern infrastructure. Artificial intelligence, in turn, has shifted significantly from the cloud into physical systems. Devices, from consumer electronics to industrial sensors, are becoming decision-making agents rather than merely passive data gatherers.

This progression is not only about performance; it is also about where intelligence resides.

To understand this change, start with the usual definition of embedded AI. According to our previous Embedded AI Systems Guide (2024), embedded AI is the direct incorporation of machine learning models into hardware-constrained systems, allowing devices to carry out tasks like perception, prediction, and decision-making without requiring continuous cloud connectivity. Typically, these systems combine sensors, processors, memory, and software into tightly integrated architectures tailored to specific use cases, such as consumer electronics, healthcare, and industrial automation.

This foundation still applies today, although advances in edge computing, hardware acceleration, and real-time AI capabilities have dramatically changed how these systems are designed, optimized, and deployed.

According to a 2026 market outlook from the Edge AI and Vision Alliance, over 70% of AI workloads are anticipated to run at the edge in the next few years due to latency, privacy, and bandwidth limitations.

Simultaneously, semiconductor companies such as NVIDIA, Qualcomm, and ARM are integrating AI accelerators directly into their chipsets.

In simple terms:

If your system interacts with the physical world, it will likely require embedded AI.

In earlier generations, this functionality was frequently restricted to specific tasks like anomaly detection, image classification, or signal processing. As explained in earlier embedded AI frameworks, these systems were usually built around static models with little adaptability, which shows how far modern architectures have come toward dynamic, context-aware intelligence.

Contents

- Architecture Is No Longer Linear: It’s Layered and Distributed

- Hardware Realities: The Constraint-Driven Design Philosophy

- Model Design: Smaller, Faster, Smarter (Not Just Smaller)

- The Overlooked Bottleneck: Memory and Data Movement

- Real-Time Systems vs AI: A Fundamental Tension

- Power Is the Real Currency

- Performance, Latency, and the Rise of Edge Computing

- Security: The New Attack Surface

- Development Toolchains Are Finally Catching Up

- Emerging Patterns You Should Be Paying Attention To

- Practical Engineering Perspective (What Actually Works)

- Closing Insight (Conclusion)

Architecture Is No Longer Linear: It’s Layered and Distributed

Sensor-processor-output was the standard sequence for traditional embedded systems. Embedded AI breaks that simplicity.

In the past, inference pipelines in embedded AI architectures were designed as fixed sequences closely tied to hardware limitations, which made them far less flexible. Most systems operated autonomously, without constant feedback loops from cloud environments, and model updates were rare. The advent of distributed and hybrid systems has clearly relaxed these restrictions.

Today's systems resemble distributed pipelines, where intelligence is divided among:

- Sensor-level preprocessing

- On-device inference

- Gateway-level aggregation

- Cloud retraining loops

This is where things get interesting: some systems now use progressive inference, which computes partial results across several stages before making a final decision.

This is especially relevant in:

- Self-driving cars

- Intelligent production lines

- Real-time video analytics

Systems act more like cooperative networks of micro-models than a single “brain.”
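One way to picture progressive inference is as a cascade with early exits: a cheap stage answers when it is confident, and only uncertain inputs reach the costlier stages. The sketch below is a minimal illustration under that assumption. The names `progressive_inference`, `tiny_stage`, and `large_stage`, the 0.9 threshold, and the toy scoring rules are all hypothetical stand-ins, not part of any specific framework.

```python
from typing import Callable, List, Tuple

# A stage takes the input and returns (label, confidence in [0, 1]).
Stage = Callable[[List[float]], Tuple[str, float]]


def progressive_inference(
    sample: List[float], stages: List[Stage], threshold: float = 0.9
) -> Tuple[str, float, int]:
    """Run stages in order and stop at the first sufficiently confident one.

    Returns (label, confidence, number_of_stages_run). If no stage reaches
    the threshold, the result of the last stage is returned.
    """
    if not stages:
        raise ValueError("at least one stage is required")
    label, confidence, index = "", 0.0, 0
    for index, stage in enumerate(stages, start=1):
        label, confidence = stage(sample)
        if confidence >= threshold:
            break  # early exit: later, costlier stages are skipped
    return label, confidence, index


# Hypothetical stand-in for a tiny sensor-level model.
def tiny_stage(sample: List[float]) -> Tuple[str, float]:
    mean = sum(sample) / len(sample)
    # Confident only when the signal is clearly far from the 0.5 decision point.
    confidence = min(1.0, 0.5 + abs(mean - 0.5) * 2)
    return ("high" if mean > 0.5 else "low", confidence)


# Hypothetical stand-in for a larger on-device model.
def large_stage(sample: List[float]) -> Tuple[str, float]:
    return ("high" if max(sample) > 0.6 else "low", 0.95)


easy = progressive_inference([0.9, 0.95, 1.0], [tiny_stage, large_stage])
hard = progressive_inference([0.5, 0.55, 0.7], [tiny_stage, large_stage])
```

In this toy setup the clear-cut sample is resolved by the first stage alone, while the ambiguous one falls through to the second. The design choice is the same one the cooperative micro-model picture implies: spend compute only where the cheap stage is unsure.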

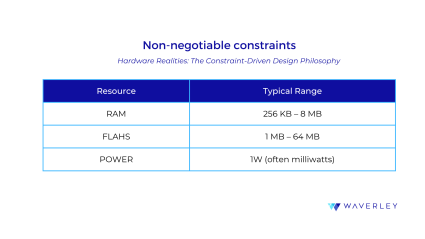

Hardware Realities: The Constraint-Driven Design Philosophy

If you approach embedded AI the same way you would cloud ML, you will fail quickly. In contrast to cloud environments, embedded systems are subject to unavoidable limitations on memory, processing power, and energy.

For this reason, companies such as NXP Semiconductors and STMicroelectronics are investing heavily in developing ultra-efficient microcontrollers with integrated AI acceleration.

Rather than scaling up hardware, engineers need to:

- Shrink models

- Streamline the flow of data

- Match computation to the silicon

This reverses the conventional perspective: you design your model for the hardware, not the other way around.

It is worth recalling that in traditional embedded AI systems, these limitations restricted both performance and the kinds of models that could be used. Because of processing limitations, early implementations mostly relied on lightweight algorithms or simplified neural networks. This highlights the significance of hardware-aware design, a concept that remains fundamental but has advanced significantly.

Model Design: Smaller, Faster, Smarter (Not Just Smaller)

A common misconception is that model optimization in embedded AI is merely about reducing model size. The actual goal is to maximize efficiency per inference: the amount of useful computation that can be performed under stringent memory, latency, and power constraints. To accomplish this, modern pipelines combine several techniques rather than relying on just one:

- Knowledge distillation transfers learned behavior from a large “teacher” model into a smaller “student” model.

- Operator fusion minimizes expensive memory transfers by combining multiple operations into a single execution step.

- Quantization reduces numerical precision (e.g., from FP32 to INT8 or even INT4) to shrink both memory footprint and compute cost.

- Structured pruning eliminates entire channels or neurons to streamline execution without compromising hardware efficiency.

When combined, these optimizations can significantly affect deployment viability: memory use can be reduced by up to 8×, inference latency can be reduced by 50–80%, and, with careful tuning, accuracy can remain close to the original model.

Here, toolchains like TensorFlow Lite for Microcontrollers and Apache TVM are essential because they allow models to be compiled and tailored for resource-constrained hardware targets, ensuring that optimizations are not merely theoretical but closely aligned with the underlying silicon.
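To make the quantization step concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It is an illustration of the arithmetic only, not how TensorFlow Lite for Microcontrollers or Apache TVM implement it. The function names and sample weights are hypothetical. Storing one byte instead of four per weight gives a 4× reduction, and moving to 4-bit storage would double that again.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization of floats to the range [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # The scale maps the largest magnitude onto 127; guard against all zeros.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights, chosen only for illustration.
weights = [0.50, -1.27, 0.03, 1.00]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))

fp32_bytes = 4 * len(weights)  # 32-bit floats
int8_bytes = 1 * len(weights)  # 8-bit integers (plus one shared scale)
```

The error bound of half a step is why accuracy can stay close to the original model when the weight distribution is well behaved. Outliers stretch the scale and cost precision everywhere else, which is one reason careful tuning matters.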

The Overlooked Bottleneck: Memory and Data Movement

Most engineers start by optimizing computation. Skilled engineers optimize the flow of data.

Why? In embedded systems, accessing off-chip memory can require orders of magnitude more energy than computation. Memory transfers, not math, frequently account for most of the latency.

Think about it this way: moving data is frequently more costly than processing it. This problem is not new, since memory bandwidth and storage constraints were among the main hurdles in early embedded AI systems. What has changed is the sophistication of the response: contemporary systems use hardware-aware scheduling and compiler-level optimizations to reduce data movement more effectively.

This results in particular design patterns:

- Tiled computation (processing data in segments)

- On-chip buffering

- Streaming inference pipelines

- Weight reuse strategies

Data locality optimization is the primary lever for improving embedded AI performance, according to companies like ARM.
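Tiled computation can be illustrated with a matrix-vector product that works on a few columns at a time. The sketch below is a plain-Python model of the idea, assuming a hypothetical `matvec_tiled` helper. On real hardware, each slice would be a copy into on-chip SRAM or a scratchpad, and the tile size would be chosen to fit that buffer.

```python
from typing import List


def matvec_tiled(weights: List[List[float]], x: List[float], tile: int) -> List[float]:
    """Compute y = W @ x by processing `tile` columns at a time.

    Each input slice is loaded once (standing in for a copy into on-chip
    memory) and reused for every row before moving on to the next tile.
    """
    if tile <= 0:
        raise ValueError("tile must be positive")
    rows, cols = len(weights), len(x)
    y = [0.0] * rows
    for start in range(0, cols, tile):
        x_tile = x[start:start + tile]  # one "load" of this input slice
        for r in range(rows):
            w_tile = weights[r][start:start + tile]
            # Accumulate the partial dot product for this tile.
            y[r] += sum(w * v for w, v in zip(w_tile, x_tile))
    return y


W = [[1.0, 2.0, 3.0, 4.0],
     [5.0, 6.0, 7.0, 8.0]]
x = [1.0, 1.0, 1.0, 1.0]
result = matvec_tiled(W, x, tile=2)
```

The result is the same for any tile size. What changes is how much data has to be resident at once, which is the quantity that matters when the fast memory is measured in kilobytes.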

Real-Time Systems vs AI: A Fundamental Tension

Embedded AI systems sit at the intersection of two fundamentally different concepts: probabilistic intelligence and deterministic execution. While AI models make predictions based on learned patterns, embedded systems must guarantee consistent timing and behavior, particularly in real-time domains such as automotive and healthcare. This creates a crucial engineering problem in which predictability becomes just as important as accuracy.

AI components are integrated into carefully regulated execution pipelines to address this. While hybrid architectures integrate AI predictions with rule-based reasoning, techniques such as latency profiling and Worst-Case Execution Time (WCET) analysis ensure that inference remains within stringent temporal bounds. In practice, this means a deterministic controller is responsible for executing the final action to ensure safety, stability, and compliance, even when an AI model detects an event.
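The hybrid pattern described above can be sketched as a small decision function in which the model only advises and fixed rules decide. Everything here is a hypothetical illustration: the `safe_action` name, the thermal scenario, the 5 ms budget, the 90 °C limit, and the 0.8 confidence floor are assumptions. Note also that timing a call after the fact only detects an overrun. It does not replace WCET analysis or a hardware watchdog.

```python
import time
from typing import Callable, Tuple

Prediction = Tuple[bool, float]  # (event_detected, confidence)


def safe_action(
    temperature_c: float,
    predict: Callable[[float], Prediction],
    budget_s: float = 0.005,
    hard_limit_c: float = 90.0,
    min_confidence: float = 0.8,
    clock: Callable[[], float] = time.perf_counter,
) -> str:
    """Return "shutdown", "throttle", or "run" for a hypothetical controller."""
    # Rule 1: the hard safety limit never depends on the model.
    if temperature_c >= hard_limit_c:
        return "shutdown"

    start = clock()
    detected, confidence = predict(temperature_c)
    elapsed = clock() - start

    # Rule 2: a late or low-confidence prediction is discarded and the
    # controller falls back to the conservative action.
    if elapsed > budget_s or confidence < min_confidence:
        return "throttle"

    # Rule 3: a timely, confident prediction informs the action, but the
    # set of possible actions is still fixed by the controller.
    return "throttle" if detected else "run"
```

The `clock` parameter exists so the timing path can be exercised with a fake clock in tests. The important property is that every branch ends in one of three known actions, whatever the model returns.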

Power Is the Real Currency

Instead of FLOPS, embedded AI measures performance in inferences per watt.

This matters because thermal restrictions limit prolonged processing, and battery-powered devices must last for days, months, or years.

Contemporary tactics include:

- Event-driven inference (performed only when triggered)

- Low-power, always-on cores for detection

- Dynamic voltage scaling

Chipmakers such as Qualcomm have developed architectures in which high-performance cores activate only when necessary, while ultra-low-power AI cores handle continuous sensing. This enables use cases such as wake-word detection, gesture recognition, and predictive maintenance.
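A quick back-of-the-envelope calculation shows why event-driven inference matters so much for battery life. The sketch below uses a simple duty-cycle model. All figures (0.1 mW always-on power, 100 mW active power, 50 ms per inference, a 1000 mWh battery) are made-up assumptions for illustration, not measurements of any real chip.

```python
def average_power_mw(
    sleep_mw: float, active_mw: float, inference_s: float, events_per_hour: float
) -> float:
    """Average power for a device that wakes its big core only on events."""
    # Fraction of each hour spent running inference, capped at 100%.
    duty = min(1.0, events_per_hour * inference_s / 3600.0)
    return sleep_mw * (1.0 - duty) + active_mw * duty


def battery_life_days(capacity_mwh: float, avg_mw: float) -> float:
    """Idealized battery life, ignoring self-discharge and conversion losses."""
    return capacity_mwh / avg_mw / 24.0


# Hypothetical device: one event per minute, 50 ms of inference per event.
event_driven_mw = average_power_mw(0.1, 100.0, 0.05, 60)
# Same device running inference back to back, all the time.
always_on_mw = average_power_mw(0.1, 100.0, 1.0, 3600)

event_driven_days = battery_life_days(1000.0, event_driven_mw)
always_on_days = battery_life_days(1000.0, always_on_mw)
```

Under these assumptions the event-driven device lasts for months while the always-on one lasts less than a day. The exact numbers depend entirely on the inputs, but the shape of the result is why inferences per watt is the metric that matters.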

Performance, Latency, and the Rise of Edge Computing

Latency becomes an important consideration as applications become more interactive and data-intensive. The performance demands of contemporary systems are sometimes too great for centralized cloud models, especially in domains such as IoT, autonomous systems, and real-time analytics.

For that reason, edge computing, in which processing takes place closer to the data source, has emerged.

By enabling developers to run code at edge locations worldwide, platforms such as Cloudflare Workers greatly reduce latency. In the same way, Microsoft Azure Edge Zones bring cloud capabilities to geographically dispersed locations. These developments show that cloud strategy is becoming distributed by design rather than centralized.

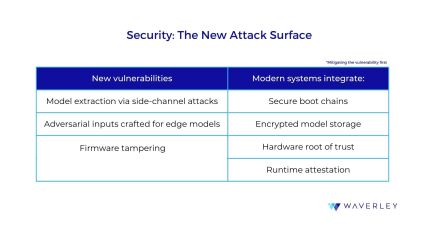

Security: The New Attack Surface

Embedding AI locally reduces cloud exposure but increases the risk of physical attacks.

Standards for hardware-level embedded system security are being developed by organizations like the Trusted Computing Group.

In older embedded AI systems, security was frequently neglected in favor of functionality and performance. However, as these systems have grown more interconnected and vital to operations, strong security measures such as secure boot, encrypted model storage, and a hardware root of trust have become essential rather than optional.
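One small piece of this picture, refusing to load a model that has been tampered with, can be sketched with Python's standard `hmac` and `hashlib` modules. This is a deliberately simplified illustration: a real secure-boot chain would typically verify an asymmetric signature against a key anchored in the hardware root of trust, whereas this sketch assumes a shared secret key held by the device. The function names are hypothetical.

```python
import hashlib
import hmac


def sign_model(model_bytes: bytes, key: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the serialized model."""
    return hmac.new(key, model_bytes, hashlib.sha256).digest()


def load_model_if_trusted(model_bytes: bytes, tag: bytes, key: bytes) -> bytes:
    """Return the model bytes only if the tag matches; otherwise refuse."""
    expected = sign_model(model_bytes, key)
    # compare_digest avoids leaking how many leading bytes matched.
    if not hmac.compare_digest(expected, tag):
        raise ValueError("model integrity check failed; refusing to load")
    return model_bytes


# Hypothetical key and model blob, for illustration only.
device_key = b"k" * 32
model_blob = b"\x00\x01example-weights"
model_tag = sign_model(model_blob, device_key)

trusted = load_model_if_trusted(model_blob, model_tag, device_key)
```

A modified blob, even by a single byte, fails the check and raises instead of loading. In a real device, the key would live in a secure element or fuse bank rather than in application memory.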

Development Toolchains Are Finally Catching Up

A few years ago, deploying ML to microcontrollers was painful. That’s no longer the case.

Today’s ecosystem includes:

- TensorFlow Lite for Microcontrollers: ultra-light inference runtime

- Edge Impulse: end-to-end pipeline (data – model – deployment)

- Apache TVM: deep optimization and compilation

What’s changed is not just tooling, but workflow:

- CI/CD pipelines now include model deployment

- OTA updates allow continuous improvement

- AI becomes part of the firmware lifecycle

This marks a substantial evolution from previous workflows, in which model deployment was often a one-time procedure with few post-deployment adjustments. Integrating AI into continuous delivery pipelines reflects a broader trend toward treating embedded systems as software-defined, updatable platforms.
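Treating the model as part of the firmware lifecycle usually implies some acceptance logic on the device: take an update only if it is newer and passes a self-test, and otherwise keep the known-good version. The sketch below is a hypothetical illustration of that rule. The `apply_update` name, the bundle dictionaries, and the tuple-based version scheme are assumptions, not the API of any OTA framework.

```python
from typing import Callable, Dict


def apply_update(
    current: Dict, candidate: Dict, self_test: Callable[[Dict], bool]
) -> Dict:
    """Return the bundle that should be active after an OTA attempt."""
    # Ignore stale or replayed updates: versions compare as tuples.
    if candidate["version"] <= current["version"]:
        return current
    # Roll back by keeping the known-good bundle if the self-test fails.
    if not self_test(candidate):
        return current
    return candidate


# Hypothetical bundles, for illustration only.
running = {"version": (1, 2, 0), "model": "detector-a"}
newer = {"version": (1, 3, 0), "model": "detector-b"}
older = {"version": (1, 1, 0), "model": "detector-old"}

accepted = apply_update(running, newer, self_test=lambda bundle: True)
rolled_back = apply_update(running, newer, self_test=lambda bundle: False)
ignored = apply_update(running, older, self_test=lambda bundle: True)
```

In practice the self-test might run the new model on a few stored reference inputs and compare against expected outputs before committing the switch.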

Emerging Patterns You Should Be Paying Attention To

The cloud is no longer the only location for generative AI. Compact, optimized models deployed directly on edge devices now enable on-device text processing, computer vision, and even multimodal capabilities with lower latency and better privacy.

Simultaneously, embedded systems are developing into agent-like entities. Rather than only executing predefined logic, devices can now monitor their own status, dynamically adjust behavior, and communicate with other nodes, moving toward autonomous, decision-capable systems.

With the adoption of RISC-V, hardware is also changing. Because of this open architecture, businesses can create custom AI accelerators more effectively, lowering costs and increasing flexibility for specific use cases.

Distributed inference, in which several devices work together to execute different model components, is another significant advancement. Intelligence is distributed throughout a network, making the system more resilient and scalable than one that depends on a single device or the cloud.

These advancements extend the fundamental capabilities outlined in previous embedded AI systems into more flexible, scalable, and connected ecosystems, marking a shift from isolated intelligent gadgets to cooperative, networked intelligence.

Practical Engineering Perspective (What Actually Works)

After all the theory, this is what consistently holds true in real deployments:

- Prioritize constraints over ambition.

- Profile every aspect (power, memory, latency).

- There is no ideal model, so expect trade-offs.

- Always include fallback logic.

- Plan for updates from the start.

And, most crucially: if your model barely fits, it doesn't fit. These ideas align with well-known approaches such as the embedded software development process, which emphasizes the importance of structured design, validation, and iteration in creating dependable and scalable systems.
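The "barely fits" rule can be turned into a simple check that profiled numbers stay below the hardware limits with a margin to spare. The sketch below is a hypothetical illustration. The `Budget` fields, the sample figures, and the 20% headroom default are assumptions, and the right margin depends on the product.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Budget:
    flash_kb: float
    ram_kb: float
    latency_ms: float


def fits_with_headroom(
    measured: Budget, limit: Budget, headroom: float = 0.2
) -> Dict[str, bool]:
    """Report, per resource, whether usage stays within (1 - headroom) of the limit."""
    if not 0.0 <= headroom < 1.0:
        raise ValueError("headroom must be in [0, 1)")
    factor = 1.0 - headroom
    return {
        "flash": measured.flash_kb <= limit.flash_kb * factor,
        "ram": measured.ram_kb <= limit.ram_kb * factor,
        "latency": measured.latency_ms <= limit.latency_ms * factor,
    }


# Hypothetical profile of a model against a hypothetical microcontroller.
profile = Budget(flash_kb=480.0, ram_kb=100.0, latency_ms=7.0)
target = Budget(flash_kb=512.0, ram_kb=256.0, latency_ms=10.0)

report = fits_with_headroom(profile, target)
deployable = all(report.values())
```

Here the model technically fits in flash (480 KB of 512 KB) but fails the check, because it leaves no room for firmware growth or a slightly larger model in a later update.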

Closing Insight (Conclusion)

It’s about precision under pressure

Embedded AI has come a long way from the basic ideas presented only a few years ago. The previous focus on providing simple on-device inference under stringent limitations has given way to designing fully integrated, adaptive, and distributed intelligent systems. Although the fundamental ideas (efficiency, hardware awareness, and real-time execution) remain the same, the scale, complexity, and capabilities of these systems have increased significantly.

Unlike earlier approaches, contemporary embedded AI is defined less by its constraints than by how well those constraints are turned into efficient, production-ready solutions. Embedded AI is already a scalable and strategic component of digital products, thanks to developments spearheaded by companies like Qualcomm and ARM, as well as established frameworks such as TensorFlow Lite for Microcontrollers.

It is now clear that companies need to focus on co-designing hardware and intelligence from the ground up rather than merely adding AI to devices. The most successful teams will not be those with the most intricate models, but those most adept at working within limitations, demonstrating that, more than ever, innovation at the edge is fueled by precision under pressure.
